Fundamentals of Statistics contains material of various lectures and courses of H. Lohninger on statistics, data analysis and chemometrics.


See also: Types of Error, Kolmogorov-Smirnov One-Sample Test

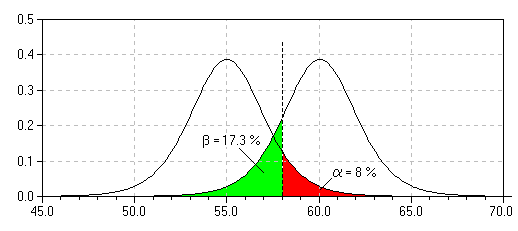

Power of a Test

The power of a statistical test is defined as the probability of correctly rejecting a false null hypothesis. Power is therefore 1 - β, where β is the probability of a Type II error. A test with low power is of little worth, even when the level of significance α is small: if such a test fails to reject the null hypothesis, the result is inconclusive, because the test would probably have failed to reject it even if it were false.
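The definition above can be illustrated by simulation: power is the fraction of repeated experiments in which a false null hypothesis is rejected. The following is a minimal sketch, not part of the original text. It assumes a one-sided z-test with known standard deviation, and the function name `simulated_power` and all parameter values (`mu_true`, `mu0`, `sigma`, `n`, `alpha`, `trials`, `seed`) are hypothetical choices made for illustration.

```python
import random
from statistics import NormalDist


def simulated_power(mu_true, mu0=0.0, sigma=1.0, n=25, alpha=0.05,
                    trials=20000, seed=1):
    """Estimate the rejection rate of a one-sided z-test by simulation.

    H0: mu = mu0 against H1: mu > mu0, with known sigma (an assumption).
    If mu_true > mu0, the returned rate estimates the power 1 - beta.
    If mu_true == mu0, it estimates the Type I error rate alpha.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha)  # critical value of the test
    se = sigma / n ** 0.5                     # standard error of the mean
    rejections = 0
    for _ in range(trials):
        # Shortcut: the mean of n normal observations is N(mu_true, se^2),
        # so the sample mean is drawn directly instead of n single values.
        xbar = rng.gauss(mu_true, se)
        if (xbar - mu0) / se > z_crit:
            rejections += 1
    return rejections / trials


power = simulated_power(mu_true=0.5)  # H0 is false here
beta = 1 - power                      # estimated Type II error probability
print(f"estimated power = {power:.3f}, estimated beta = {beta:.3f}")
```

Calling `simulated_power(mu_true=0.0)` instead simulates a true null hypothesis, so the rejection rate should then be close to α rather than to the power.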

Note that for a given experimental set-up, the power of a test and the level of significance are related to each other: decreasing the level of significance α (which is favorable, since the probability of making an error when rejecting the null hypothesis is lowered) also decreases the power of the test (which is bad, since the probability of making a Type II error increases). Thus, without changing the experiment, we have to make a compromise between a low level of significance and a high power of the test.
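The trade-off described above can be made concrete with a small calculation. This is a sketch under stated assumptions, not the author's own example: it uses a one-sided z-test with known standard deviation, and the function name `z_test_power` and the values for `effect`, `sigma` and `n` are hypothetical. For this test the power is 1 - Φ(z₁₋α - effect·√n/σ), where Φ is the standard normal distribution function.

```python
from statistics import NormalDist


def z_test_power(alpha, effect=0.5, sigma=1.0, n=25):
    """Power of a one-sided z-test of H0: mu = mu0 against mu = mu0 + effect.

    Assumes normally distributed data with known sigma (illustrative only).
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)  # rejection threshold for alpha
    shift = effect * n ** 0.5 / sigma       # true effect in standard errors
    return 1 - std_normal.cdf(z_crit - shift)


# Same experiment (effect, sigma, n fixed), increasingly strict alpha.
for alpha in (0.10, 0.05, 0.01, 0.001):
    power = z_test_power(alpha)
    print(f"alpha = {alpha:<6} power = {power:.3f}  beta = {1 - power:.3f}")
```

With the experiment held fixed, each reduction of α lowers the printed power and raises β. Changing the experiment, for example by passing a larger `n`, raises the power at the same α, which is why the compromise applies only when the set-up is fixed.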
