Fundamentals of Statistics contains material of various lectures and courses of H. Lohninger on statistics, data analysis and chemometrics.


See also: Regression - Introduction

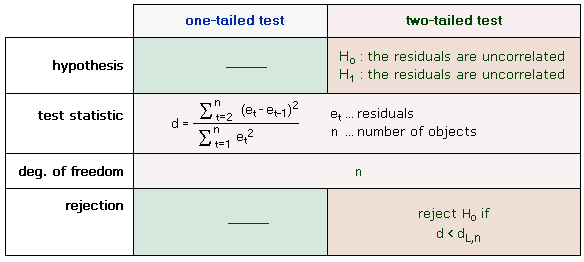

Uncorrelated Residuals - Durbin-Watson Test

One of the prerequisites for linear regression is that the residuals must not be serially correlated. Serial correlation of the residuals indicates that the selected regression model does not fully explain the actual relationship. If the residuals are ordered because the independent variable is inherently ordered, as is the case in time series, for example, we speak of autocorrelation of the residuals. Correlated residuals may lead to one of the following problems:

Although the correlation structure of the residuals may be arbitrarily complex, the most common and simplest case (i.e. the serial correlation of neighboring data values) is easy to check for. The most popular test for serial correlation is the Durbin-Watson test. This test is based on the assumption that consecutive residuals ε are correlated according to the following equation:

$\varepsilon_t = \rho\,\varepsilon_{t-1} + \omega_t$

Here $\rho$ is the correlation between consecutive residuals, and the disturbance term $\omega_t$ is normally distributed with a mean of 0 and a constant variance. The null hypothesis of the Durbin-Watson test assumes this correlation to be zero:

$H_0: \rho = 0$
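To make the model above concrete, the following minimal sketch simulates residuals according to the first-order equation and computes the Durbin-Watson statistic for them. The statistic is not defined in this section; the sketch assumes its usual form, the sum of squared differences of consecutive residuals divided by the sum of squared residuals. The function names `simulate_ar1_residuals` and `durbin_watson` are illustrative and not taken from the text.

```python
import random


def simulate_ar1_residuals(n, rho, sigma=1.0, seed=0):
    """Generate n residuals with eps[t] = rho * eps[t-1] + omega[t].

    omega[t] is drawn from a normal distribution with mean 0 and
    standard deviation sigma. The series starts from a previous value
    of 0 (an assumption made for this illustration).
    """
    rng = random.Random(seed)
    residuals = []
    previous = 0.0
    for _ in range(n):
        previous = rho * previous + rng.gauss(0.0, sigma)
        residuals.append(previous)
    return residuals


def durbin_watson(residuals):
    """Durbin-Watson statistic in its usual form (assumed here):

    d = sum((e[t] - e[t-1])**2 for t >= 1) / sum(e[t]**2)
    """
    numerator = sum((b - a) ** 2 for a, b in zip(residuals, residuals[1:]))
    denominator = sum(e * e for e in residuals)
    return numerator / denominator


if __name__ == "__main__":
    for rho in (0.0, 0.8):
        d = durbin_watson(simulate_ar1_residuals(5000, rho))
        print(f"rho = {rho}: d = {d:.3f}")
```

For long series the statistic is approximately 2(1 - r), where r is the sample correlation of neighboring residuals. Values near 2 are therefore compatible with the null hypothesis, while values well below 2 point to positive serial correlation. Whether a particular value is significant has to be judged against tabulated critical values, which this sketch does not cover.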
